The Rack: Physical Infrastructure and the Tarot Node Map

The 8U rack build, the PoE cluster, the network topology, the audio network, and the Tarot node map.

8 rack units, 10-inch form factor, sitting on a desk. It houses the agent cluster, the memory server, the monitoring node, all the networking, and a hardwired audio system that lets any machine on the LAN speak through passive speakers mounted on the rack rails.

The whole thing draws under 200W.

The Nodes

Every node in the lab is named after a Tarot card. The full mapping and the reasoning behind it are in part 1. Here’s the physical layout:

| Node | Card | Function |
|---|---|---|
| Threadripper workstation | The Magician | Inference, development, all workloads simultaneously |
| Beelink SER8 | The High Priestess | k3s control plane, Postgres, Matrix, Vault, TTS |
| Pi 4 | The Hermit | Pi-hole DNS, Prometheus, Grafana, rack TUI dashboard |
| Pi5 (wand01) | Ace of Wands | Creative generation, outreach, proposals |
| Pi5 (sword01) | Ace of Swords | Code review, methodology critique, arXiv scans |
| Pi5 (cup01) | Ace of Cups | Literature synthesis, cross-domain connections |
| Pi5 (pentacle01) | Ace of Pentacles | Ops, cost tracking, standups, scheduling |

The Hardware

Rack: GeeekPi RackMate T1, 8U, 10-inch, 7.87-inch depth

Open-frame aluminum and acrylic. The 10-inch form factor forces discipline. Everything that goes in the rack has earned its place.

Parts list, top to bottom (not affiliate links):

- Top of rack: Fosi Audio V3 class D amp

- U1: GeeekPi 1U LCD touchscreen; rack dashboard display

- U2: GeeekPi 0.5U vented shelves; Beelink SER8 (The High Priestess) and Pi4 (The Hermit) side by side

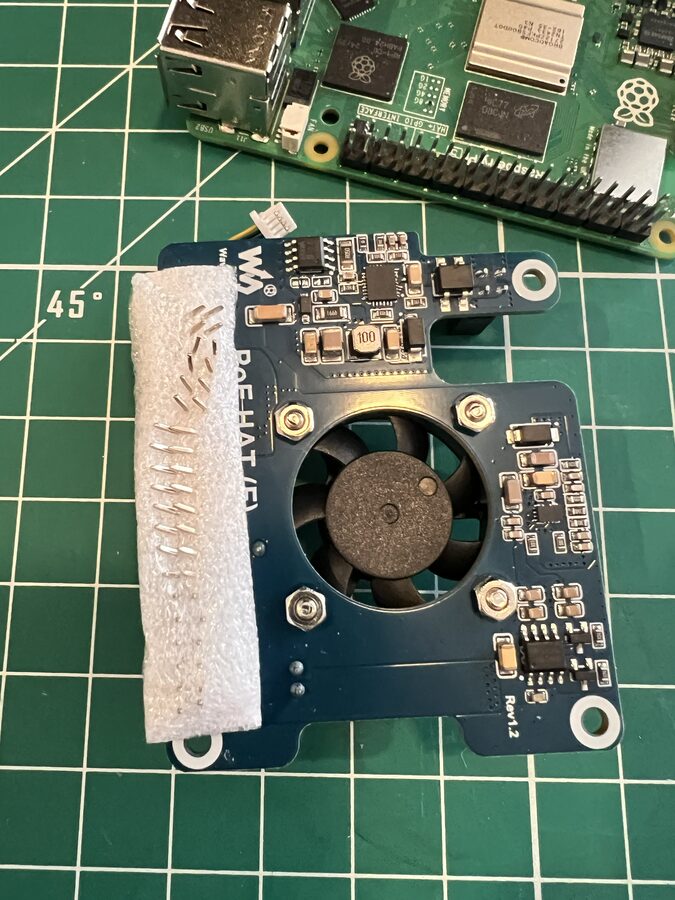

- U3-U4: GeeekPi 2U Pi5 rack mount; 4x Raspberry Pi 5 8GB with Waveshare PoE HAT (F)

- U5: TP-Link TL-SG1210MP in Blazin3D 10-inch adapter; 8-port PoE switch, powers all Pi5s

- U6: Enmane 12-port CAT6a patch panel; all connections terminate here. Ports labeled: WAND, SWORD, CUP, PENTACLE, PRIESTESS, HERMIT, SPEAKER, UPLINK, MAGUS, plus spares.

- U7: NICGIGA 10GbE switch (2x 10G + 4x 2.5G) facing the rear; router ingress and 10G uplink to the workstation

- U8: ElecVoztile 1U PDU; 8 rear outlets, surge protection

- Speakers: Pure Resonance MC2.5B on swivel brackets, mounted to the back rack rails

- Cabling: Rapink Cat6a 0.5ft and 1ft patch cables, banana plugs, speaker wire

Network

All connections are hardwired CAT6a. No WiFi in the infrastructure stack. 10Gb fiber from the ISP into the router, 10GbE from the router into the NICGIGA switch, 10G from the switch to the workstation. The whole path from the internet to the GPUs is 10 gigabit. All inter-node addressing runs over a Tailscale mesh VPN – every node gets a stable 100.x.x.x IP and a MagicDNS hostname, so ssh reed@priestess works from anywhere on the tailnet.

    Internet (10Gb fiber)
        │
    [Router]
        │
    [NICGIGA 10GbE]
        ├── 10G port → magus (workstation)
        ├── 2.5G port → TP-Link uplink
        └── 2.5G port → priestess (SER8) direct path

    [TP-Link PoE, U5]
        ├── PoE → wand01 (Pi5, Tailscale: 100.xx.xx.xx)
        ├── PoE → sword01 (Pi5, Tailscale: 100.xx.xx.xx)
        ├── PoE → cup01 (Pi5, Tailscale: 100.xx.xx.xx)
        ├── PoE → pentacle01 (Pi5, Tailscale: 100.xx.xx.xx)
        ├── port → priestess (SER8)
        ├── port → hermit (Pi4)
        └── port → audio amp
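The 100.x.x.x addresses come out of the carrier-grade NAT block 100.64.0.0/10, which is the range Tailscale assigns from. When a config file is full of hand-typed mesh IPs, a quick sanity check can catch a LAN address that slipped in. This is only a sketch; the function name is made up for illustration.

```python
import ipaddress

# Tailscale hands out IPv4 addresses from the CGNAT block 100.64.0.0/10.
TAILSCALE_V4_RANGE = ipaddress.ip_network("100.64.0.0/10")


def is_tailnet_address(addr: str) -> bool:
    """Return True if `addr` parses as an IPv4 address inside 100.64.0.0/10."""
    try:
        return ipaddress.ip_address(addr) in TAILSCALE_V4_RANGE
    except ValueError:
        # Hostnames and malformed strings are not IP addresses at all.
        return False


if __name__ == "__main__":
    for candidate in ("100.101.102.103", "192.168.1.10", "priestess"):
        print(candidate, is_tailnet_address(candidate))
```

Note that "100.x.x.x" is slightly broader than the real range: an address like 100.128.0.1 starts with 100 but sits outside the block.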

The PoE HATs on all 4 Pi5s eliminate wall adapters entirely. Data and power run over the same patch cable.

The 10GbE spine is why the workstation matters to the cluster. When agents call local inference, they’re hitting a 10G link to a machine with 24GB of GPU VRAM. Barely different from a local socket.

Pi-hole runs on the Hermit as the global DNS for the tailnet, overriding upstream DNS via the Tailscale admin console. Every node on the mesh uses it automatically – no per-node config. A custom local record maps attune.matrix to the priestess so all Matrix traffic resolves internally. If the Hermit goes down, Tailscale falls back to 1.1.1.1.

The Audio Network

The goal was simple. Any machine on the LAN sends audio to the passive speakers, no physical audio cables from source machines.

The SER8 runs a TTS endpoint that any node on the Tailscale mesh can hit as a tool call. Send it text or a stream, it runs real-time TTS and plays through the speakers. The SER8’s 3.5mm out goes to a Fosi Audio V3 class D amp via RCA, which drives the Pure Resonance speakers through banana plugs.

    Any node on Tailscale mesh
      → tool call to SER8 TTS endpoint
      → SER8 runs Kokoro TTS
      → 3.5mm out → RCA → Fosi Audio V3
      → banana plugs → speaker wire
      → Pure Resonance MC2.5B (on rack rail brackets)

pentacle01 reads the morning standup aloud. The lab has a voice.
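From the calling node's side, the chain above starts with a single HTTP request. Here is a minimal sketch of what that client could look like. The URL, port, path, and JSON payload shape are all assumptions for illustration, since the actual endpoint contract isn't described here.

```python
import json
import urllib.request

# Hypothetical endpoint: the host name matches the MagicDNS name used above,
# but the port, path, and payload shape are placeholders, not the real API.
TTS_URL = "http://priestess:8880/speak"


def build_tts_request(text: str, url: str = TTS_URL) -> urllib.request.Request:
    """Build (but do not send) a POST request asking the SER8 to speak `text`."""
    if not text.strip():
        raise ValueError("nothing to speak")
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def speak(text: str, url: str = TTS_URL, timeout: float = 10.0) -> int:
    """Send the request and return the HTTP status code."""
    request = build_tts_request(text, url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Splitting request construction from sending keeps the payload logic testable on a machine that can't reach the tailnet.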

The SER8: The High Priestess

The SER8 does more work than anything in the rack. Memory, communication, coordination, secrets, and the k3s control plane that schedules work onto the Pi5 cluster all live here.

Ryzen 7 8845HS with a Radeon 780M integrated GPU, enough for Kokoro TTS at real-time speed. 2 M.2 slots: the boot drive and a 2TB expansion drive mounted at /data where Postgres, the Obsidian vault, and KuzuDB all live. Rebuilt from scratch as an Arch Linux server.

PostgreSQL is the collective’s ground truth. Every agent has a memory namespace in agent_memory with pgvector embeddings for semantic recall. pentacle01 tracks every task, every standup, every health check in structured tables. cup01 stores synthesis notes and resonance connections. sword01 logs review verdicts and open technical concerns. When pentacle01 generates the daily standup at 8am, it queries the last 24 hours of collective activity out of Postgres: tasks shipped, PRs opened, what’s blocked, what needs my attention.
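The standup logic boils down to "everything touched in the last 24 hours, grouped by status". The sketch below shows that shape in plain Python over in-memory records. In the real setup this would be a SQL query against Postgres; the record fields (`title`, `status`, `updated_at`) are hypothetical and stand in for whatever the actual tables hold.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def standup_summary(tasks, now, window=timedelta(hours=24)):
    """Group task titles by status for tasks updated inside the window.

    `tasks` is an iterable of dicts with hypothetical keys
    "title", "status", and "updated_at" (a timezone-aware datetime).
    """
    cutoff = now - window
    grouped = defaultdict(list)
    for task in tasks:
        if cutoff <= task["updated_at"] <= now:
            grouped[task["status"]].append(task["title"])
    return dict(grouped)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    sample = [
        {"title": "Ship rack post", "status": "shipped",
         "updated_at": now - timedelta(hours=3)},
        {"title": "Fix exporter", "status": "blocked",
         "updated_at": now - timedelta(hours=20)},
        {"title": "Old task", "status": "shipped",
         "updated_at": now - timedelta(hours=30)},
    ]
    for status, titles in standup_summary(sample, now).items():
        print(f"{status}: {', '.join(titles)}")
```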

Matrix Synapse is how the agents talk. Locally hosted at attune.matrix, self-signed TLS, no federation (this is a private network). All 4 agents and I share a room called #attune-collective. wand01 pitches blog post ideas there on Thursdays. sword01 posts PR review summaries. cup01 drops literature resonance notes. pentacle01 reads the morning standup aloud through the speakers and posts the text to the channel. I follow the conversation in Element on the workstation or on my phone via Tailscale. All 4 agent accounts are created with E2EE.

HashiCorp Vault runs as a k3s pod with Raft storage for secrets management. API keys, agent tokens, and database credentials get synced to k3s Secrets via External Secrets Operator. Unsealing is handled by an age-encrypted script; bootstrap secrets live in 1Password.

The agents run on a fork of hermes-agent from Nous Research. I submitted a PR to add Matrix gateway support with E2EE. It was passed over in favor of another implementation, but Matrix is now supported on main. I maintain my fork for the specific needs of this collective.

My bias is always to build from scratch, but the harness is commoditizing. Hermes-agent solves enough of the hard problems that forking it and focusing on the domain-specific parts is the right call.

The Pi4: The Hermit

Recycled Pi 4 running Raspberry Pi OS. It doesn’t participate in the work. It watches.

When 4 agents are running 24/7 on their own heartbeat cycles, making inference calls, writing to Postgres, posting to Matrix, opening PRs, you need to see what’s happening without asking. pentacle01 tracks task-level health from the inside. The Hermit tracks system-level health from the outside: is the GPU overheating, is Postgres running out of connections, is a Pi5 pod stuck in a restart loop, is Pi-hole blocking what it should be blocking.

The Hermit runs Pi-hole, Prometheus, and Grafana as a Docker Compose stack, plus a custom Python/Rich TUI dashboard on the 1U rack-mounted touchscreen (1424x280, 178x16 characters). All five containers in the stack stay up across reboots via restart: unless-stopped on each container and a systemd unit for the Compose stack.

    # docker-compose.yml (abbreviated)
    services:
      pihole:           # port 53/80 -- DNS for the tailnet
      pihole-exporter:  # port 9666 -- custom Pi-hole v6 API exporter
      node-exporter:    # port 9100 -- host metrics
      prometheus:       # port 9090 -- 30d retention, scrapes all 7 nodes via Tailscale IPs
      grafana:          # port 3000 -- 8 provisioned dashboards

Prometheus scrapes every node on the tailnet at 100.x.x.x:9100 (node-exporter), plus the GPU exporter on magus (:9835), the Postgres exporter on priestess (:9187), and the Pi-hole exporter locally (:9666). Eight Grafana dashboards are provisioned as JSON – one per node plus aggregate views for the k3s cluster, GPU, Postgres, and Pi-hole.
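One way to keep that target list from drifting is to generate it. The sketch below emits target groups in the JSON shape that Prometheus file-based service discovery reads (a list of objects with `targets` and `labels`). It is an illustration, not the config running on the Hermit: it uses MagicDNS hostnames where the real setup uses Tailscale IPs, the `exporter` label name is made up, and pointing the Pi-hole exporter at `hermit` rather than a Compose service name is an assumption.

```python
import json

# Hostnames as used on the tailnet; the real config uses 100.x.x.x addresses.
NODES = ["magus", "priestess", "hermit", "wand01", "sword01", "cup01", "pentacle01"]

NODE_EXPORTER_PORT = 9100

# Extra exporters and the ports quoted above.
EXTRA_EXPORTERS = {
    "gpu": ("magus", 9835),
    "postgres": ("priestess", 9187),
    "pihole": ("hermit", 9666),
}


def build_target_groups(nodes=NODES, extras=EXTRA_EXPORTERS):
    """Return file_sd-style target groups: one for node-exporter, one per extra."""
    groups = [
        {
            "targets": [f"{node}:{NODE_EXPORTER_PORT}" for node in nodes],
            "labels": {"exporter": "node"},
        }
    ]
    for name, (host, port) in extras.items():
        groups.append({"targets": [f"{host}:{port}"], "labels": {"exporter": name}})
    return groups


if __name__ == "__main__":
    # Write this output to a file referenced by file_sd_configs in prometheus.yml.
    print(json.dumps(build_target_groups(), indent=2))
```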

The TUI dashboard runs outside Docker in a host venv – it needs a real pty for the touchscreen dimensions and reads raw touch events from /dev/input to advance between 5 tap-navigable views: overview (all 7 nodes), compute (magus GPU detail), database (priestess Postgres), cluster (4x Pi5 agents), and network (Pi-hole DNS stats). Wayfire auto-launches it into a fullscreen foot terminal on boot.
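The tap navigation is a small state machine: each tap advances to the next view and wraps at the end. Here is a sketch of that piece alone, with the touch input and Rich rendering left out. The class and function names are invented for illustration; only the view names and screen dimensions come from the description above.

```python
VIEWS = ("overview", "compute", "database", "cluster", "network")


class ViewCycler:
    """Tracks which dashboard view is showing and advances on each tap."""

    def __init__(self, views=VIEWS):
        if not views:
            raise ValueError("at least one view is required")
        self._views = tuple(views)
        self._index = 0

    @property
    def current(self) -> str:
        return self._views[self._index]

    def tap(self) -> str:
        """Advance to the next view, wrapping around after the last one."""
        self._index = (self._index + 1) % len(self._views)
        return self.current


def cell_size(width_px: int, height_px: int, cols: int, rows: int):
    """Pixel size of one character cell as (width, height)."""
    return (width_px / cols, height_px / rows)


if __name__ == "__main__":
    cycler = ViewCycler()
    print(cycler.current)
    for _ in range(5):
        print(cycler.tap())
    print(cell_size(1424, 280, 178, 16))
```

With the numbers given, each character cell works out to 8 pixels wide and 17.5 pixels tall, which is why the layout has to be planned around 16 rows.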

The k3s Cluster

Each agent runs as a k3s pod pinned to its own Pi5. That’s the whole point of the cluster: each cognitive mode gets its own physical hardware, its own memory ceiling, its own failure domain. If sword01’s pod crashes while reviewing a PR, cup01’s literature synthesis keeps running on a different board. pentacle01 notices the restart, logs it, and posts to #attune-collective.

Priestess runs the k3s server (control plane). The 4 Pi5s join as worker nodes over Tailscale. Traefik is disabled; there’s no ingress, just internal services.

    # Control plane (priestess, Arch Linux):
    curl -sfL https://get.k3s.io | sh -s - server \
      --disable traefik \
      --cluster-cidr 10.42.0.0/16 \
      --service-cidr 10.43.0.0/16

    # Worker nodes (each Pi5, Raspberry Pi OS):
    curl -sfL https://get.k3s.io | \
      K3S_URL=https://100.xx.xx.xx:6443 \
      K3S_TOKEN=<token> sh -

The Pi5s need cgroup_memory=1 cgroup_enable=memory appended to /boot/firmware/cmdline.txt before k3s will start – Raspberry Pi OS doesn’t enable memory cgroups by default.
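Because cmdline.txt must stay a single line, and running a naive append twice duplicates the flags, it helps to make the edit idempotent. A minimal sketch of that text transformation follows. The function name is illustrative, and it assumes the command line contains no quoted arguments with spaces.

```python
REQUIRED_FLAGS = ("cgroup_memory=1", "cgroup_enable=memory")


def ensure_cgroup_flags(cmdline: str, flags=REQUIRED_FLAGS) -> str:
    """Return a single-line kernel command line containing each flag exactly once.

    Existing parameters keep their order; missing flags are appended at the end.
    Assumes no quoted arguments containing whitespace.
    """
    tokens = cmdline.split()
    for flag in flags:
        if flag not in tokens:
            tokens.append(flag)
    return " ".join(tokens)


if __name__ == "__main__":
    from pathlib import Path

    path = Path("/boot/firmware/cmdline.txt")
    # Dry run: print the result instead of writing it. Applying it for real
    # needs root and a backup of the original file.
    print(ensure_cgroup_flags(path.read_text()))
```

A reboot is still needed afterwards, since the kernel only reads its command line at boot.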

Each node gets identity labels. When a deployment manifest says nodeSelector: attune/suit=wands, that pod only ever runs on wand01’s physical Pi5. The agent’s identity is bolted to hardware.

    kubectl label node wand01 attune/suit=wands attune/element=fire
    kubectl label node sword01 attune/suit=swords attune/element=air
    kubectl label node cup01 attune/suit=cups attune/element=water
    kubectl label node pentacle01 attune/suit=pentacles attune/element=earth
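On the manifest side, the pinning is just a nodeSelector that matches one of those labels. The sketch below builds a minimal Deployment as a Python dict and prints it as JSON, which kubectl accepts the same way it accepts YAML. The image name and the `app` label are placeholders, and the real manifests certainly carry more than this (secrets, resource limits, and so on).

```python
import json

# Suit label per agent node, matching the kubectl label commands above.
SUITS = {
    "wand01": "wands",
    "sword01": "swords",
    "cup01": "cups",
    "pentacle01": "pentacles",
}


def agent_deployment(agent: str, image: str) -> dict:
    """Build a single-replica Deployment pinned to the agent's own Pi5."""
    labels = {"app": agent}  # hypothetical pod label, used only for the selector
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": agent},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # The pod can only be scheduled on a node carrying this label.
                    "nodeSelector": {"attune/suit": SUITS[agent]},
                    "containers": [{"name": agent, "image": image}],
                },
            },
        },
    }


if __name__ == "__main__":
    # "example/agent:latest" is a placeholder image name.
    print(json.dumps(agent_deployment("wand01", "example/agent:latest"), indent=2))
```

The output can be piped straight into `kubectl apply -f -`. If the labeled node is down, the pod stays Pending rather than landing on another board, which is the intended behavior here.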

I run kubectl from the workstation with a kubeconfig pointing at priestess over Tailscale. The Pi5s are headless – I rarely SSH into them directly. When I do, it’s usually to check journalctl -u k3s-agent.

Building It

Assembly order matters in a rack this tight. A few things I learned the hard way:

One of the Pi5 PoE hats arrived with horribly bent pins. Mauled in shipping. I straightened them with needle-nose pliers and it’s been running fine since, but that was a tense 20 minutes.

The bottom 2 rack units (PDU and shelves) are a rat’s nest of power cables, surge protectors, and DC adapters crammed into very little space. It works, but it’s the part of the build I’m least proud of.

Getting all the nodes talking was its own project. The Pi5s and Pi4 run Raspberry Pi OS; the workstation and SER8 run Arch. Wiring everything into a Tailscale mesh, getting k3s to form a proper cluster across the nodes, standing up Matrix with self-signed TLS – each step uncovered something the previous step assumed.

Build order:

- Mount the PDU first (U8); all AC power terminates here

- Install the brush cable manager (U7); gives you routing room before anything sits above it

- Mount the patch panel (U6); the reference point everything else wires to

- Mount the PoE switch (U5) before the Pi cluster, because PoE cables route upward

- Install the Pi5 2U mount (U3-U4); wire each Pi5 to the patch panel before closing

- Install shelves (U2) for the SER8 and Pi4

- Mount the LCD touchscreen (U1)

- Run and dress all cables

- Place the Fosi Audio V3 on top once everything else is stable

The speakers go on last, mounted to the back rack rails once the cable runs are dressed.

What’s Next

The rack is built. The network works. Matrix is live, Postgres is running, the Pi5s are enrolled in the cluster. The infrastructure is waiting for the agents to wake up on it.

Reach me on X if you have questions or want to compare notes.